Linux-Administration-I¶

System Administration - Linux Administration¶

Linux administration is the art and science of efficiently managing Linux-based computer systems. At its core, Linux administration involves overseeing and maintaining the health, security, and performance of Linux servers and workstations. It encompasses a wide range of tasks, from the installation and configuration of the Linux operating system to the management of users, software, and network services.

Table of Contents¶

Chapter 2: Managing Files From the Command Line

Chapter 3: Getting Help in Red Hat Enterprise Linux

Chapter 4: Creating, Viewing, and Editing Text Files

Chapter 6: Controlling Access to Files with Linux File System Permissions

Chapter 7: Monitoring and Managing Linux Processes

Chapter 14: Accessing Linux File Systems

Indtrocution¶

Linux, an open-source operating system known for its robustness and flexibility, powers a substantial portion of the world’s computing infrastructure, from web servers to supercomputers. As a Linux administrator, your responsibilities include setting up and maintaining servers, ensuring data security, resolving system issues, and optimizing performance to meet the demands of modern computing.

This discipline offers a rich and diverse landscape of knowledge and skills, ranging from mastering command-line tools and scripting to configuring network services and handling software deployment and updates. Linux administration is essential for businesses and organizations of all sizes, as it provides a stable and cost-effective foundation for their IT infrastructure.

As the guardians of the Linux ecosystem, administrators play a pivotal role in ensuring the reliable and efficient operation of Linux systems. They troubleshoot problems, automate routine tasks, and keep the Linux environment up to date with the latest security patches and software upgrades.

With the ever-expanding use of Linux in various domains, from cloud computing to Internet of Things (IoT) devices, Linux administration continues to be a critical and dynamic field. It offers a rewarding and challenging career path for those with a passion for open-source technology and a dedication to maintaining the digital engines that power the modern world. Whether you’re a seasoned professional or just beginning your journey into Linux administration, this field promises continuous learning and opportunities to contribute to the ever-evolving landscape of open-source computing.

What is System Administration?¶

System administration, often referred to as sysadmin or system administration, is a critical discipline in the field of information technology (IT). It involves the management, configuration, maintenance, and overall operation of computer systems and networks. System administrators, or sysadmins, are responsible for ensuring that IT infrastructure and services are running smoothly and efficiently to meet the needs of organizations and users.

The core tasks and responsibilities of a system administrator may include:

Task/Responsibilities |

Description |

|---|---|

Hardware Maintenance |

Sysadmins are responsible for the upkeep, repair, and replacement of hardware components such as servers, desktops, laptops, and network devices. This includes routine maintenance, hardware upgrades, and troubleshooting hardware issues. |

Software Installation and Maintenance |

Installing, configuring, updating, and maintaining software applications and operating systems on servers and workstations. This involves keeping software up-to-date with patches and security updates. |

Network Management |

Managing local area networks (LANs), wide area networks (WANs), and internet connectivity. Sysadmins are responsible for configuring routers, switches, firewalls, and ensuring network security. |

Security Management |

Implementing security measures to protect systems and data from unauthorized access and cyber threats. This includes managing user accounts, setting up access controls, and monitoring for security breaches. |

Data Backup and Recovery |

Developing and implementing data backup strategies to ensure data is safe and recoverable in case of system failures, data corruption, or disasters. |

User Support |

Providing technical support to end-users, helping them with hardware and software issues, troubleshooting problems, and resolving technical challenges. |

Server Administration |

Managing and maintaining servers, including web servers, file servers, database servers, and email servers. This includes server setup, configuration, and optimization for performance. |

Virtualization |

Utilizing virtualization technologies to create virtual machines (VMs) for efficient resource allocation, server consolidation, and scalability. |

Monitoring and Performance Tuning |

Monitoring system performance, identifying bottlenecks, and optimizing system resources to ensure efficiency and reliability. |

Automation and Scripting |

Creating and maintaining scripts to automate routine administrative tasks, making processes more efficient and less error-prone. |

User and Group Management |

Managing user accounts, permissions, and access control for various systems and services. |

Compliance and Documentation |

Ensuring that systems and processes adhere to industry standards and regulatory requirements. Maintaining comprehensive documentation of system configurations and procedures. |

Disaster Recovery Planning |

Developing and testing disaster recovery plans to minimize downtime and data loss in case of emergencies. |

Capacity Planning |

Analyzing current and future needs to allocate resources effectively, ensuring that systems can handle increasing workloads. |

Patch Management |

Keeping systems and software up-to-date with security patches, bug fixes, and updates to mitigate vulnerabilities. |

Sysadmins play a vital role in maintaining the reliability, security, and functionality of IT environments, whether in an enterprise setting, government agency, or small business. Their work ensures that computers and networks operate smoothly, reducing downtime, protecting data, and enabling users to perform their tasks effectively.

History of Operating Systems¶

Year |

Release |

|---|---|

1950s - 1960s |

The earliest computers had no operating systems. Users interacted directly with the hardware using machine language. Batch processing systems were developed to automate tasks. |

1960s - Early 1970s |

IBM’s OS/360 was a significant development during this period, providing a family of operating systems. Meanwhile, MIT developed the Compatible Time-Sharing System (CTSS), an early interactive OS. |

Late 1960s - Early 1970s |

AT&T’s Bell Labs created Unix, a groundbreaking operating system. Unix was written in C, making it portable across different hardware platforms. |

1970s - 1980s |

The rise of personal computers and workstations led to various operating systems, including Apple’s MacOS, Microsoft’s MS-DOS, and Microsoft Windows. |

Late 1980s - Early 1990s |

Linux, a Unix-like OS, was created by Linus Torvalds, based on the principles of Unix. It’s open-source and has since become a dominant force in the world of servers and embedded systems. |

1990s - 2000s |

The Windows operating system, with Windows 95, 98, NT, 2000, XP, and later versions, became the dominant OS for personal computers. |

2000s - Present |

Mobile operating systems like iOS and Android became essential for smartphones and tablets. Virtualization technologies, cloud computing, and containerization have reshaped the landscape. |

Unix & Linux¶

Aspect |

Information |

|---|---|

Unix |

Unix is an operating system developed in the late 1960s at AT&T’s Bell Labs.Unix is known for its multitasking and multi-user capabilities.It introduced many fundamental concepts in computing, like the shell, pipelines, and the hierarchical file system.Unix became the basis for many other operating systems, such as Linux and macOS. |

Linux |

Linux is a Unix-like operating system created by Linus Torvalds in 1991.It is open-source and can run on a wide range of hardware, making it highly portable.Linux distributions, or distros, package the Linux kernel with additional software and tools to create complete operating systems. Examples include Ubuntu, Fedora, and CentOS.Linux is widely used in servers, embedded systems, and supercomputers. |

Key Differences |

Unix refers to the original operating system developed at AT&T’s Bell Labs and its various descendants, including commercial Unix versions.Linux is a Unix-like kernel that is part of a complete operating system, usually provided by a Linux distribution.Unix systems are typically commercial and may have licensing costs, while Linux is open-source and often free to use.Linux has a more diverse and active development community, leading to rapid innovation and adaptation. |

Linux System Layers¶

Linux, like other modern operating systems, is organized into several layers, each serving a specific role in the system’s operation. These layers are typically organized as follows:

Layer |

Description |

|---|---|

Hardware Layer |

This is the lowest layer and consists of the physical hardware components of the computer, such as the CPU, memory, storage devices, and peripherals. |

Kernel Layer |

The kernel is the core of the Linux operating system. It directly interacts with the hardware and manages system resources, including CPU, memory, and I/O devices.It provides essential services like process management, memory management, and device drivers.The kernel is responsible for maintaining system stability and security. |

System Libraries Layer |

Above the kernel, system libraries provide a collection of functions and libraries that applications can use to interact with the kernel and perform common tasks.These libraries offer a level of abstraction for developers, making it easier to write software that can run on different hardware and Linux distributions. |

Shell Layer |

The shell is a command-line interface that allows users and scripts to interact with the operating system.It interprets commands and acts as a user interface to access system resources and execute programs.Common shells include Bash (Bourne Again Shell), Zsh, and Fish. |

User Space Applications Layer |

This layer includes all the software applications that run on top of the lower layers. These applications serve various purposes, from system utilities to user interfaces.Common user space applications include web browsers, text editors, office suites, and development tools.User space applications may interact with the kernel and system libraries to access hardware and system services. |

User Layer |

This is the topmost layer and includes user-generated data and configuration files.It encompasses user home directories, documents, settings, and preferences.Users directly interact with this layer to create, modify, and manage their data. |

These layers are interconnected, with each layer building on the capabilities and services provided by the layers below it. The kernel is the heart of the system, managing hardware resources and providing essential services, while user space applications and the user layer make Linux a versatile and powerful platform for a wide range of tasks and use cases.

Linux System Startup process¶

The startup process of a Linux system, also known as the boot process, is a series of steps that occur when the computer is powered on or restarted. Here’s a simplified version of what happens during the boot process:

process |

details |

|---|---|

BIOS/UEFI Initialization |

When you power on your computer, the Basic Input/Output System (BIOS) or Unified Extensible Firmware Interface (UEFI) firmware is the first software that runs.The firmware conducts a Power-On Self-Test (POST) to check hardware components like the CPU, memory, and storage devices.It then locates the boot device, typically a hard drive or SSD. |

Boot Loader |

The boot loader is responsible for loading the operating system. The most commonly used boot loader on Linux systems is GRUB (Grand Unified Bootloader).The boot loader displays a menu (if multiple operating systems are installed) and allows you to choose which OS to load.After selection, the boot loader loads the Linux kernel into memory. |

Kernel Initialization |

The Linux kernel is loaded into memory. It’s the core of the operating system.The kernel initializes hardware, sets up memory, and prepares the system for user-space programs.Kernel parameters can be passed to control its behavior. |

Init System (Systemd) |

In traditional Unix systems, the init process (e.g., SysV init) was responsible for starting system services.Modern Linux distributions use systemd as the init system. It’s responsible for managing system services and running scripts. |

User Space Initialization |

The init system (or systemd) starts the user space services and daemons.This includes setting up network connections, initializing hardware drivers, and other system-level tasks. |

Login Screen (Optional) |

If the Linux system is configured for graphical login, the display manager (e.g., GDM, LightDM) starts and presents a login screen to the user.The user enters their credentials, and upon successful login, a desktop environment is launched. |

User Session (Desktop Environment) |

In a graphical environment, the user’s desktop environment or window manager starts, providing the user with a graphical interface.In a text-based environment, the user interacts with a command-line interface (CLI). |

User Login |

The user logs in and can start using the system.At this point, user-specific services and applications can be initiated. |

The specific details of the boot process can vary depending on the Linux distribution and the system’s configuration. Some distributions use different init systems, and variations in the boot process may occur based on the hardware and software installed. However, the overall sequence remains consistent across most Linux systems.

What is RedHat?¶

Red Hat is a leading provider of open-source software solutions, particularly known for its Red Hat Enterprise Linux (RHEL) operating system. The book you mentioned appears to be a guide or training material for learning Linux administration using Red Hat Enterprise Linux.

RedHat Book Chapters¶

Let’s break down how you might approach learning the content of the first four chapters in detail:

Chapter |

Content |

|---|---|

Chapter 1: Accessing the Command Line |

This chapter is a fundamental starting point for Linux administration. It introduces you to accessing the command line, which is essential for managing Linux systems.You’ll learn how to use the local console and the GNOME desktop environment to interact with the command line.Understanding basic Bash shell commands and keyboard shortcuts is crucial for working in a command-line environment. |

Chapter 2: Managing Files From the Command Line |

This chapter covers the Linux file system hierarchy, which is critical for understanding where data and system files are located.You’ll learn how to locate files by name and use command-line tools for managing and manipulating files.Path name expansion and shell expansion are explored, which help you efficiently work with files and directories. |

Chapter 3: Getting Help in Red Hat Enterprise Linux |

Learning how to access and read documentation is crucial. You’ll explore commands like man and pinfo for accessing manual pages and other documentation.You’ll also discover how to get help from Red Hat, which includes creating and viewing system reports (SoS reports). |

Chapter 4: Creating, Viewing, and Editing Text Files |

Text file management is a fundamental skill. This chapter explores how to redirect output to files or programs, edit text files from the command line, and use graphical editors.You’ll work with tools like Vim for text editing. |

Chapter 5: Managing Local Linux Users and Groups |

Managing user accounts and groups is a key responsibility of a Linux administrator. This chapter covers user and group concepts, how to gain superuser access, and creating users and managing groups using command-line tools. |

Chapter 6: Controlling Access to Files with Linux File System Permissions |

Understanding file system permissions is crucial for securing your Linux system. You’ll learn how to interpret and manage file and directory permissions. |

Chapter 7: Monitoring and Managing Linux Processes |

Process management is a core task of a Linux administrator. This chapter delves into processes, controlling jobs, and killing processes.Monitoring process activity is essential for troubleshooting and performance optimization. |

Chapter 8: Controlling Services and Daemons |

Managing system services and daemons is a critical responsibility. You’ll explore how to identify automatically started processes and control system services using tools like systemctl. |

Chapter 9: Configuring and Securing OpenSSH Service |

This chapter covers configuring and securing the SSH service for remote access.SSH key-based authentication and customizing SSH service configuration are essential for securing remote access. |

Chapter 10: Analyzing and Storing Logs |

Understanding system logs is vital for monitoring and troubleshooting. You’ll learn about system log architecture, reviewing log files, and preserving the systemd journal.Maintaining accurate system time is also addressed. |

Chapter 11: Managing Red Hat Enterprise Linux Networking |

Networking is a fundamental part of Linux administration. This chapter introduces networking concepts, validating network configurations, configuring networking with nmcli, and editing network configuration files. |

Chapter 12: Archiving and Copying Files Between Systems |

Archiving and file transfer are practical skills. You’ll learn about managing compressed tar archives and securely copying files between systems. |

Chapter 13: Installing and Updating Software Packages |

Software management is a crucial aspect of Linux administration. This chapter covers attaching systems to subscriptions for software updates, managing RPM packages, and using yum for software installation and updates. |

Chapter 14: Accessing Linux File Systems |

Understanding file systems and devices is important. This chapter explores how to identify and mount file systems, make links between files, and locate files on the system. |

Chapter 15: Using Virtualized Systems |

Virtualization is a technology widely used in server environments. This chapter introduces managing local virtualization hosts and installing new virtual machines. |

Chapter 16: Comprehensive Review |

A comprehensive review chapter helps you consolidate and test your knowledge of the topics covered in the preceding chapters. |

Chapter 1: Accessing the Command Line¶

Let’s dive deeper into the first chapter, “Accessing the Command Line.” This chapter serves as an introduction to the command-line interface in Linux, an essential skill for any Linux administrator. I’ll provide you with a tutorial-style breakdown of the key topics covered in this chapter.

Section 1: Accessing the Command Line Using the Local Console¶

Info¶

Info |

Details |

|---|---|

Motivation |

Understanding how to access the command line from the local console is crucial. It provides you with a direct way to interact with your Linux system, which is essential for both system administrators and power users. |

Objective |

To introduce you to accessing the command line from the local console. |

Workspace |

Press Ctrl + Alt + F3 or Ctrl + Alt + F4 (or other function keys) to access a virtual terminal.You’ll be presented with a text-based login prompt, where you can enter your username and password to access the command line.At the command prompt, you can execute various Linux commands. |

Command: Ctrl + Alt + F3¶

Pressing Ctrl + Alt + F3 switches to the third virtual terminal, where you can log in and access the command line interface.

You can switch back to the graphical interface with Ctrl + Alt + F2.

Tips¶

Keep in mind that the command line is a powerful tool. Always double-check your commands before hitting Enter to prevent unintended consequences.

It’s essential to keep your username and password secure as this method of access provides full control of the system.

Use strong, unique passwords for security.

Remember to log out properly when done to free up system resources.

Section 2: Accessing the Command Line Using the Desktop¶

Info¶

Info |

Details |

|---|---|

Motivation |

Accessing the command line from the desktop environment is valuable when working on Linux systems with a graphical user interface (GUI). It allows you to switch between graphical and command-line tasks seamlessly. |

Objective |

To teach you how to access the command line from the desktop environment (GNOME). |

Workspace |

Open a terminal emulator from the GNOME desktop, you can typically find it in the applications menu or by using a keyboard shortcut, often Ctrl + Alt + T. |

Command: Ctrl + Alt + T¶

The terminal emulator provides a graphical interface for the command line.

You can run commands in the terminal just like you would in the local console.

Tips¶

Terminal emulators are highly customizable. You can change fonts, colors, and keyboard shortcuts to suit your preferences.

Familiarize yourself with keyboard shortcuts to launch the terminal quickly.

Learning to navigate the GUI using the command line can enhance your efficiency.

Section 3: Executing Commands Using the Bash Shell¶

Info¶

Info |

Details |

|---|---|

Motivation |

The Bash shell is the workhorse of the command line. It provides a powerful environment for executing commands, scripting, and automation. Understanding how to use it is essential for effective Linux administration. |

Objective |

To introduce you to the Bash shell and executing basic commands. |

Workspace |

Learn to run simple commands like ls (list files) and pwd (print working directory) to navigate and inspect the file system.Understand command structure and options. |

Commands: ls, pwd¶

Command |

Description |

Usage |

|---|---|---|

ls |

List files and directories in the current directory. |

ls [options] [directory] |

ls -l |

List files and directories in long format. |

ls -l [directory] |

pwd |

Print the current working directory. |

pwd |

The Bash shell is your command-line interface. You’ll use it to execute commands and manage the system.

Example commands include ls (list files) and pwd (print working directory). These commands help you navigate and inspect the file system.

Tips¶

Create aliases for frequently used commands to save time and reduce typing errors. For example, alias ll=’ls -l’ makes ll equivalent to ls -l.

Mastering basic Bash commands is crucial; these skills form the foundation of your Linux administration knowledge.

Use descriptive and meaningful file and directory names to make your work more manageable.

Section 4: Lab: Accessing the Command Line¶

Info¶

Info |

Details |

|---|---|

Motivation |

Hands-on labs are essential for reinforcing your learning and building confidence in your command-line skills. |

Objective |

A practical exercise to solidify your understanding of accessing the command line. |

Workspace |

Exercise accessing the command line both from the local console and within the GNOME desktop environment.Practice running basic commands and navigating the file system to become comfortable with the command-line interface. |

Clean Code Advice |

In your commands and scripts, use meaningful and self-explanatory variable and file names. Writing clear and concise code makes it easier to understand and maintain. |

Pro Programmer’s Tips |

Document your commands and their results for reference.Use version control tools to track changes in your scripts and configurations. |

Tips¶

Practice Regularly: The more you practice, the more proficient you become. Make the command line your playground and test various commands.

Embrace Mistakes: Don’t be afraid to make mistakes on the command line. It’s a great way to learn. If you make an error, you can often recover or start over.

Seek Additional Resources: Complement your learning with books, online tutorials, and Linux forums. Learning from a variety of sources can provide different perspectives and insights.

Keep a Cheat Sheet: Many Linux administrators maintain a list of common commands and shortcuts for quick reference. This can save you time and effort in your daily tasks.

Frequently Asked Questions (FAQ)¶

What is the command line, and why is it important in a Linux environment?

Answer: The command line is a text-based interface to interact with a computer’s operating system. It’s important in Linux for tasks like system administration, automation, and efficient file management.

Explain the difference between the local console and the desktop environment when accessing the command line.

Answer: The local console is a text-based interface directly on the system, while the desktop environment is a graphical user interface. The console is useful for system recovery and maintenance, whereas the desktop environment provides a user-friendly interface.

What is the purpose of the Bash shell, and how does it differ from other shells?

Answer: Bash is a command-line shell used for executing commands and scripts. It’s the default shell in most Linux distributions. Differences between shells include syntax and features.

How do you navigate through directories using the command line, and what are some common commands for file management?

Answer: Use commands like cd to change directories and ls to list files. File management commands include cp, mv, and rm.

Chapter 2: Managing Files From the Command Line¶

In this chapter, you’ll embark on a journey through the intricacies of managing files from the Linux command line. Effective file management is a fundamental skill for Linux administrators and users alike. Understanding the structure of the Linux file system, how to locate files, and mastering key file management commands are essential. From organizing your own projects to finding important data efficiently, these skills will serve you well in your Linux journey.

Section 1: The Linux File System Hierarchy¶

Info¶

Info |

Details |

|---|---|

Motivation |

Understanding the Linux file system hierarchy is fundamental to effective file and system management. It provides the structure for where data and system files are located. |

Objective |

Gain a deep understanding of the Linux file system hierarchy. |

Workspace |

Open a terminal emulator to access the command line, as you learned in the previous chapter. |

The Linux File System Hierarchy¶

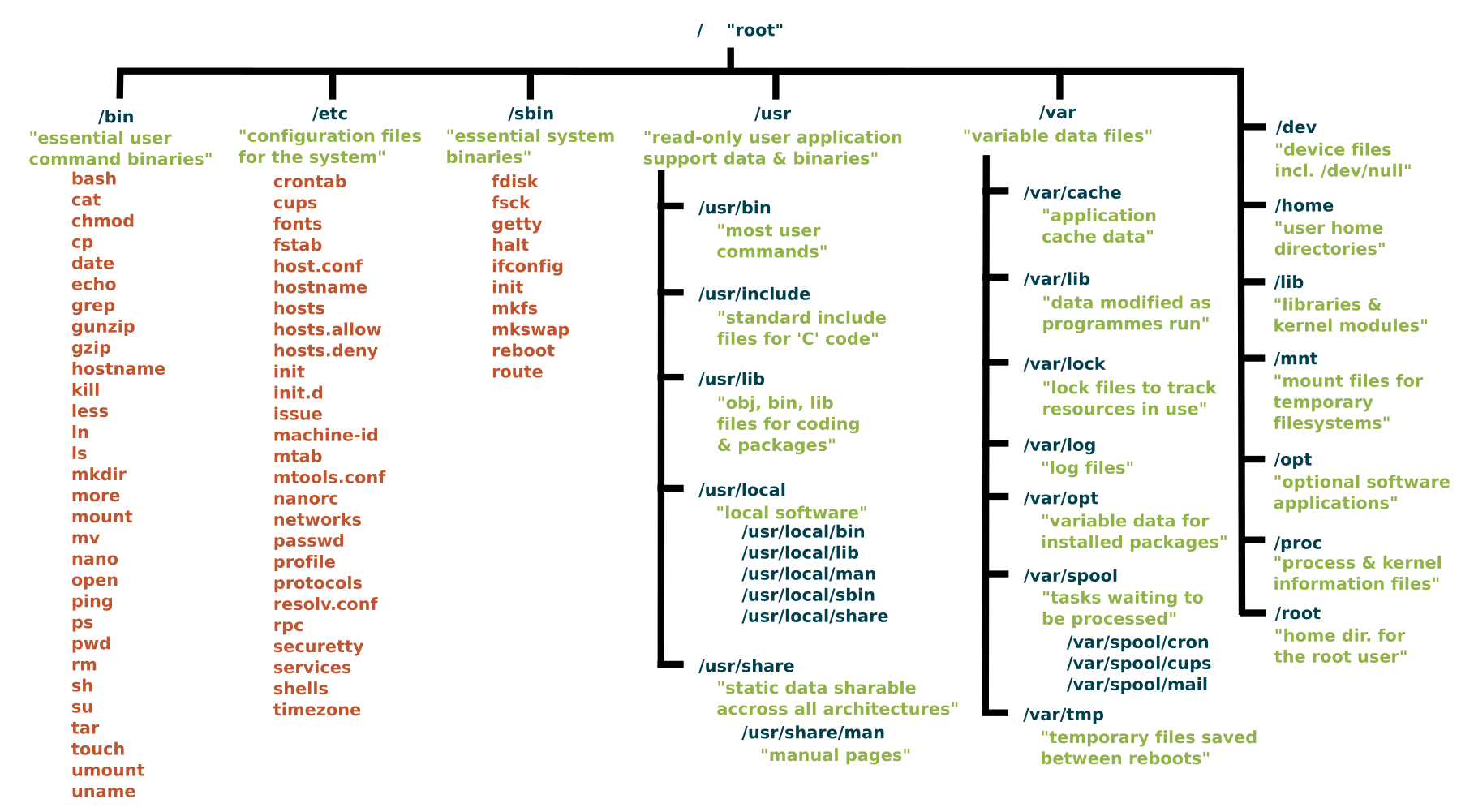

The Linux File System is organized logically into a hierarchical structure, which aims to ensure user-friendly and secure usage of the system. The organization provides both system-level protection and individual user privacy. Each direcotry serving a specific purpose.

The Linux file system hierarchy is a structured layout of directories and files, starting with the root directory /.

Key directories include /bin (system binaries), /home (user home directories), and /etc (system configuration files).

Common directories in the Linux file system hierarchy include:

Common directories in the Linux file system hierarchy include:

Directory |

Details |

|---|---|

/ (Root Directory) |

The root of the file system. Everything on a Linux system, including all files and directories, stems from this directory. |

/bin (Binary Programs) |

Essential binary files required for booting and repairing the system. It contains fundamental commands, such as ls (list files), cp (copy), and mv (move). |

/boot (Boot Files) |

Contains boot loader and kernel files necessary for system startup. |

/dev (Device Files) |

Contains special files representing devices connected to the system, such as hard drives, partitions, and peripheral devices.Within the /dev directory, you can find various devices, including disks and disk drives, which are known as block devices in Linux. The hard disks (mass storage devices) connected to the system are represented by device files such as /dev/sda (1,2,3, etc.), while USB devices are denoted by device files like /dev/usb (1,2,3, etc.). |

/etc (System Configuration Files) |

Houses system-wide configuration files and scripts. Important system configuration files, including network settings and software configurations, are stored here. These configuration files control various aspects of the Linux system, including network configuration, authentication, services, and hardware settings. |

/home (User Home Directories) |

Contains user home directories. Each user has a subdirectory in /home where they can store personal files and configuration settings. |

/lib (Libraries) |

Essential shared libraries, including the C programming code library, and kernel modules used by various programs. These files are essential in booting the file system and executing commands within the root file system.Shared libraries are identified by their file extension *.so. The Windows equivalent would be a Dynamic Link Library (.DLL). These libraries are vital for the normal functioning of the system. |

/media (Removable Media) |

Used for mounting removable media devices like USB drives and optical discs. When a removable device is attached, it is typically mounted in a subdirectory here. |

/mnt (Mount Points) |

A directory often used for mounting temporary file systems or network shares. System administrators can choose to mount various resources in subdirectories under /mnt. |

/opt (Optional Software) |

A location for optional or add-on software packages, often from third-party sources. |

/proc (Kernel and Process Information) |

A pseudo-file system that provides information about running processes and kernel parameters. It is used for interacting with and monitoring system processes. |

/root (Root User’s Home) |

The home directory of the root user, typically used for system administration tasks. |

/sbin (System Binaries) |

Contains essential system administration binaries. These binaries are required for system maintenance and recovery. |

/srv (Service Data) |

This directory is used for data related to services provided by the system, such as web server content. |

/tmp (Temporary Files) |

A directory for storing temporary files created by various applications and users. The contents of /tmp are typically volatile and often deleted on system reboot.By using the /tmp directory, programs can share temporary data without encumbering other directories, thus promoting system stability. |

/usr (User Binaries and Libraries) |

Contains user binaries, libraries, and data files. This directory can be shared among multiple systems over a network. The contents within the /usr directory are usually read-only. |

/var (Variable Data) |

Used for variable data files that change during the operation of the system, such as log files, mail spools, and other frequently changing data. It also includes system and application logs.Other subdirectories within the /var directory include /var/spool for storing data that is awaiting processing by programs or users, such as print jobs queues and mail queues; /var/tmp for holding temporary files that are typically not preserved during system reboots; and /var/cache, which contains cached data from applications. |

Tips¶

Use descriptive and well-organized directory structures for your own projects.

When navigating the file system, use tab completion to save time and reduce the risk of typos.

Section 2: Locating Files by Name¶

Info¶

Info |

Details |

|---|---|

Motivation |

The ability to locate files by name is essential for efficient file management and data retrieval. This skill saves time and effort when dealing with a large number of files. |

Objective |

Learn how to locate files by name. |

Workspace |

Continue using the terminal emulator. |

Command: find¶

Commnad |

Description |

|---|---|

find |

Used to locate files by its name |

The find command is a powerful tool for locating files by name. For example, find / -name myfile.txt searches for a file named myfile.txt starting from the root directory /.

You can refine your search by specifying directories, file types, and more.

find /path/to/search -name 'filename'

In this Command:

‘/path/to/search/’: Replace this with the directory where you want to start your search. The command will search for the specified file name and its variations starting from directory and its subdirectories.

‘“filename”’: Replace this with the name of file you’re looking for. You can use wildcards to search for files with a specific pattern. For example; if you want to find all files with a ‘.txt’ extention, you can use ‘”*.txt”’.

Here is an example to find all text files (files with a ‘.txt’ extention) in the users’s home directory

find /home/user -name "*.txt"

This command will search the /home/user directory and its subdirectories for files with names ending in .txt.

Keep in mind that the find command is very flexible and can be used to search for files using various criteria, not just by name. It’s a versatile tool for locating and working with files in a Linux or Unix system.

Tips¶

Use regular expressions to create more flexible and complex file search patterns.

Be cautious when searching from the root directory (/), as it may take some time to complete.

Section 3: Managing Files Using Command-Line Tools¶

Info¶

Info |

Details |

|---|---|

Motivation |

Learning to manage files using command-line tools is vital for Linux administrators. These tools provide efficient ways to handle files and directories. |

Objective |

Understand how to manage files using command-line tools. |

Workspace |

Stay within the terminal emulator. |

Commands: cp, mv, rm¶

Command |

Description |

|---|---|

cp |

Copies files or directories from one location to another.It creates a duplicate of the source file or directory in the destination. |

mv |

Moves or renames files or directories from one location to another.It changes the location or name of the source file or directory. |

rm |

Removes (deletes) files or directories.Be cautious, as deleted files are typically not recoverable. |

Commands like cp (copy), mv (move), and rm (remove) are used for common file management tasks.

For example, cp file1.txt /path/to/destination copies a file, mv file1.txt newname.txt renames a file, and rm file1.txt deletes a file.

Bash Codes Examples¶

# Copy a file from the current directory to another directory

cp file.txt /path/to/destination/

# Copy a directory and its contents to another directory (use -r for recursive)

cp -r source_directory/ /path/to/destination/

# Move a file to another directory

mv file.txt /path/to/destination/

# Rename a file (move it to the same directory with a different name)

mv oldname.txt newname.txt

# Move a directory and its contents to another directory

mv source_directory/ /path/to/destination/

# Remove a file

rm file.txt

# Remove a file forcefully without asking for confirmation

rm -f file.txt

# Remove a directory and its contents (use -r for recursive)

rm -r directory/

Tips¶

Please exercise caution when using the rm command, especially with the -r (recursive) option, as it can permanently delete files and directories. Double-check the paths and consider using -i (interactive) option to confirm each file deletion.

Use the -r option with cp and mv to work with directories and their contents.

Be cautious with the rm command, as deleted files are not typically recoverable.

Section 4: Matching File Names Using Path Name Expansion¶

Info¶

Info |

Details |

|---|---|

Motivation |

Path name expansion is a handy feature for quickly working with files based on their names. It simplifies file operations and saves time. |

Objective |

Explore path name expansion to match file names efficiently. |

Workspace |

Continue using the terminal emulator. |

Wildcards¶

In the Bash shell, there are several wildcards, also known as glob patterns, that you can use to match files and directories based on patterns. Here are the most commonly used wildcards:

Wildcard |

Description |

|---|---|

* (Asterisk) |

Matches any sequence of characters. For example, *.txt matches all files with a .txt extension. |

? (Question Mark) |

Matches any single character. For example, file?.txt matches files like file1.txt and fileA.txt. |

[ ] (Square Brackets) |

Matches a single character from a specified range or set. For example, [0-9] matches any single digit. |

[! ] (Exclamation Mark within Square Brackets) |

Matches any single character that is not in the specified range or set. For example, [!aeiou] matches any character that is not a vowel. |

{ } (Braces) |

Allows you to specify multiple patterns or options. For example, {file1,file2}.txt matches either file1.txt or file2.txt. |

** (Double Asterisk) |

Used for recursive globbing. It matches directories and their contents recursively. For example, **/*.txt matches all .txt files in subdirectories. |

These wildcards provide powerful pattern-matching capabilities when working with files and directories in the command line. You can use them with various commands, such as ls, cp, mv, and rm, to perform operations on files that match specific patterns.

Command Explanation:

Path name expansion is also known as “wildcards.” Common wildcards include * (matches any characters) and ? (matches a single character).

For example, cp *.txt /path/to/destination copies all files with the .txt extension to the destination directory.

Bash Code Examples using Wildcards¶

1- Matching All Files in the Current Directory:

To list all files in the current directory, you can use the * wildcard character, which matches any sequence of characters.

ls *

2- Matching Files with a Specific Extension:

If you want to list all files with a specific file extension (e.g., .txt), you can use the * wildcard with the extension.

ls *.txt

3- Matching Files with Variable Names:

You can use the ? wildcard character to match a single character. For example, to list files with names like file1.txt and file2.txt, you can use:

ls file?.txt

4- Matching Files with Variable Characters:

To match files with variable characters, you can use character classes in square brackets. For example, to list files with names like fileA.txt and fileB.txt, you can use:

ls file[A-B].txt

5- Combining Wildcards:

You can also combine multiple wildcards to create more complex patterns. For example, to list all files with a single-digit number in the name and a .txt extension, you can use:

ls *[0-9].txt

Tips¶

Be careful when using wildcards to avoid unintended operations.

Use the ls command with wildcards to preview which files will be affected by a command.

Frequently Asked Questions (FAQ)¶

Q: What is the default directory structure of the Linux File System?

The default structure of the Linux File System is a hierarchical structure that begins with the root directory. All other directories stem from the root directory.

Q: What is an inode and how is it used in the Linux File System?

An inode is an index node. It serves as a unique identifier for a specific piece of metadata on a given filesystem. Each piece of metadata describes what we think of as a file.

Q: How do I manage disk space in the Linux File System?

You can manage disk space using the Graphical User Interface (GUI). Disk space management can be done using a file manager, but you can also use the du (disk usage), and df (disk free) commands in the terminal.

Q: How is data organized in the Linux File System?

Linux uses devices to receive, send, and store data. Typically, devices correspond to physical hardware components like hard disks, USB sticks, or input/output devices such as a keyboard. However, some devices may not have a hardware component, and in such cases, the kernel provides pseudodevices that you can access as if they were physical devices.

When formatting a partition on a disk, the operating system writes the filesystem, as we have previously discussed, so that you can efficiently organize data within the logical filesystem hierarchy provided by Linux.

Q: What is the difference between the root directory and the home directory in Linux?

The root directory is the top most level of the Linux File System, and everything, including the home directory, falls under the root directory. The home directory contains the data for a particular user. Every user registered on the system will have their own named directory under the home directory.

Q: How do you create and delete files and directories in the Linux File System?

The creation and deletion of files and folders are possible through both the GUI and the command-line terminal. The GUI provides an intuitive approach where you can simply right-click anywhere and create a new folder.

Alternatively, in the terminal, you can use the mkdir command to create a new directory. Similarly, to create a new file, you can use the touch command.

Q: How do you locate files by name using the find command, and provide an example?

Answer: The find command searches for files by name in a specified directory. For example, to find all .txt files in the /home/user directory, you can use find /home/user -name “*.txt”.

Q: What is path name expansion, and how can you use wildcards to match file names?

Answer: Path name expansion allows you to use wildcards like *, ?, [ ], and { } to match file names based on patterns. For example, *.txt matches all files with a .txt extension.

Q: Explain the purpose of file permissions in Linux and provide an example of changing file permissions.

Answer: File permissions control who can access and modify files. You can change file permissions using the chmod command. For example, chmod 644 file.txt sets read and write permissions for the owner and read-only permissions for others.

Chapter 3: Getting Help in Red Hat Enterprise Linux¶

Abstarct Introduction¶

In Chapter 3, we explore the essential skill of seeking help and documentation in Red Hat Enterprise Linux. As a system administrator, it’s crucial to know how to access and utilize various resources to find solutions, troubleshoot problems, and learn about the system.

Section 1: Reading Documentation Using man Command¶

Info¶

Info |

Details |

|---|---|

Motivation |

Accessing the built-in manual pages is fundamental for understanding command usage and options. |

Objective |

Learn how to use the man command to access command documentation. |

Explanation |

The man command is a vital tool for accessing manual pages in Linux. These pages provide detailed information about command usage, options, and functionality. |

Command: man¶

Command |

Description |

|---|---|

man |

Displays the manual page for a specified command. |

To use man, simply type man followed by the command you want to learn about:

man ls

This command displays the manual page for the ls command. You can navigate through the manual using arrow keys, and press ‘q’ to exit.

Tips¶

Use the man command to quickly access command documentation.

Manual pages are organized into sections, so you may need to specify the section number (e.g., man 5 passwd for file format descriptions).

Section 2: Reading Documentation Using info Command¶

Info¶

Info |

Details |

|---|---|

Motivation |

Learn about an alternative documentation system, “info,” which provides more extensive documentation for certain commands. |

Objective |

Learn how to use the info command to access detailed documentation. |

Explanation |

The info command provides a more extensive documentation system compared to man. It’s often used for commands and concepts that require in-depth explanations. |

Command: info¶

Command |

Description |

|---|---|

info |

Displays detailed documentation for a specified command or concept. |

To access info pages, use:

info command

For example:

info tar

Tips¶

info pages are structured, and you navigate them using arrow keys and commands like ‘n’ (next) and ‘p’ (previous).

Some commands may have both man and info pages, allowing you to choose the depth of information you need.

Section 4: Getting Help From Red Hat¶

Info¶

Info |

Details |

|---|---|

Motivation |

Learn how to access support and resources from Red Hat for assistance with Red Hat Enterprise Linux. |

Objective |

Discover how to obtain support from Red Hat and create an SOS report. |

Explanation |

Red Hat offers various resources for support and assistance. You can access Red Hat’s customer support portal for documentation, knowledge base articles, and support tickets. Additionally, you can create an SOS report to provide system information for troubleshooting. |

Command: sosreport¶

Command |

Description |

|---|---|

sosreport |

Generate an SOS report for troubleshooting. |

To create an SOS report, use:

sosreport

This generates a compressed tarball containing system information that can be shared with Red Hat support.

Tips¶

Red Hat provides extensive support, including documentation, knowledge base articles, and customer support.

Creating an SOS report can be crucial for resolving complex system issues.

Summarize How to search for Command Information¶

Searching for information about a command in Linux is a common task, especially when you need to understand its usage, options, and examples. Here’s how you can search for information about a command:

1- Man Pages (Manual Pages):

The primary source of information for most Linux commands is the manual pages, or man pages. You can access the man page for a command by typing man followed by the command’s name. For example:

man ls

This will display a detailed manual page with information about the command’s usage, options, and often examples.

2- Info Pages:

Some commands have info pages in addition to man pages. You can access info pages by typing info followed by the command’s name. For example:

info cp

Info pages often provide more detailed and structured information compared to man pages.

3- Online Documentation:

Many Linux distributions provide online documentation that includes command references and guides. You can often access these resources via a web browser or by searching for “Linux command name documentation” in your favorite search engine.

4- Command Help:

Many commands have built-in help options that you can access by using the –help or -h switch. For example:

ls --help

This will provide a brief overview of the command’s options.

5- Online Forums and Communities:

If you’re facing a specific issue or have questions about a command, you can search online forums and communities like Stack Exchange, Linux forums, and Reddit’s Linux-related subreddits. Many experienced Linux users are willing to help.

6- Books and Documentation:

Linux books, guides, and documentation often include detailed explanations of various commands and their usage. You can consult these resources for in-depth information.

Frequently Asked Questions (FAQ)¶

Interview questions may focus on a candidate’s knowledge of accessing and utilizing various resources for seeking help and documentation. Here are some common interview questions related to this chapter:

Q: What is the purpose of the man command in Linux, and how do you use it to access command documentation?

Answer: The man command is used to access manual pages that provide detailed information about command usage, options, and functionality. To use it, you type man followed by the command you want to learn about. For example, man ls displays the manual page for the ls command.

Q: What is the difference between man and info documentation in Linux, and when would you use one over the other?

Answer: man and info are both documentation systems. man provides concise command documentation, while info offers more extensive documentation, often used for in-depth explanations. The choice depends on the depth of information required for a particular command.

Q: Explain how to access package-specific documentation stored in the /usr/share/doc directory.

Answer: Package-specific documentation is often stored in the /usr/share/doc directory. To access it, navigate to the directory and list available documentation packages with ‘ls’. You can then use commands like ‘less’ to view specific documentation files.

Q: What resources does Red Hat provide for obtaining support and assistance with Red Hat Enterprise Linux?

Answer: Red Hat offers various resources, including the customer support portal for documentation, knowledge base articles, and support tickets. You can also create an SOS report to provide system information for troubleshooting.

Q: What is the purpose of an SOS report, and how do you generate one in Red Hat Enterprise Linux?

Answer: An SOS report is used to gather system information for troubleshooting complex issues. To generate an SOS report, you can use the sosreport command. It creates a compressed tarball containing system information that can be shared with Red Hat support.

Chapter 4: Creating, Viewing, and Editing Text Files¶

Abstract Introduction:¶

Chapter 4 delves into working with text files in Red Hat Enterprise Linux. Text files are fundamental components for configuration, scripting, and system management. This chapter covers creating, viewing, and editing text files using command-line and graphical tools.

Section 1: Redirecting Output to a File or Program¶

Info¶

Info |

Details |

|---|---|

Motivation |

Understand how to capture and redirect command output to a file. |

Objective |

Learn how to use redirection to save command output to a file or send it to another program. |

Explanation |

Redirecting output allows you to save the results of a command or send them to another program or file. The > and >> operators are used for this purpose. Use > to create a new file or overwrite an existing one, and >> to append to an existing file. |

Commands: >, >>¶

Command |

Description |

|---|---|

> |

Redirect output to a file (overwrite if it exists). |

>> |

Append output to an existing file. |

# Redirect the output of a command to a file (overwrite if it exists)

command > output.txt

# Append the output of a command to an existing file

command >> output.txt

Tips¶

Use > to create a new file or replace the content of an existing file.

Use >> to add output to an existing file without overwriting it.

Section 2: Editing Text Files from the Shell Prompt¶

Info¶

Info |

Details |

|---|---|

Motivation |

Learn how to create and edit text files directly from the command line. |

Objective |

Understand the basics of text editing with command-line tools. |

Explanation |

Linux provides text editors like vi and nano for creating and editing text files from the command line. For example, to create a new file or edit an existing one: |

Commands: vi, nano¶

Command |

Description |

|---|---|

vi (vim) |

Opens the Vim text editor for file editing.Vim is a powerful, modal text editor with modes for navigating, inserting, and command execution. |

nano |

Opens the nano text editor for file editing.Nano is a straightforward and user-friendly text editor that provides basic editing capabilities. |

To create or edit a file with Vim or nano:

vi filename.txt

nano filename.txt

Tips:¶

vi and nano are popular text editors in Linux.

Use i in vi to enter insert mode for editing, and press Esc to exit insert mode.

Section 3: Editing Text Files with a Graphical Editor¶

Info¶

Info |

Details |

|---|---|

Motivation |

Explore the use of graphical text editors for editing text files. |

Objective |

Learn how to use graphical editors to work with text files. |

Explanation |

In a graphical environment, tools like Gedit or Mousepad provide a user-friendly way to edit text files. To open a file using Gedit: |

Command: getit, mousepad¶

Command |

Description |

|---|---|

gedit |

Opens the Gedit graphical text editor for file editing. |

mousepad |

Opens the Mousepad graphical text editor for file editing. |

gedit filename.txt

Tips¶

Graphical editors provide a more user-friendly interface for text editing.

Use keyboard shortcuts and menu options for common editing tasks.

Frequently Asked Questions (FAQ)¶

Interview questions may focus on a candidate’s ability to work with text files and text editors in Linux. Here are some common interview questions related to this chapter:

Q: Explain the purpose of text files in Linux and why they are essential for system administration.

Answer: Text files are fundamental for storing configuration data, scripts, and system-related information. They are essential for system administration because they allow for easy configuration management and automation.

Q: Describe the differences between command-line text editors like vi and nano and graphical text editors like Gedit. When would you use one over the other?

Answer: Command-line text editors are typically used in terminal environments, while graphical editors provide a user-friendly interface. vi and nano are often used in terminal sessions for quick edits, while graphical editors are preferred for more extensive editing tasks.

Q:How can you create a new text file using the command line, and what command would you use to open and edit it with vi?

Answer: You can create a new text file with touch and edit it with vi using the command vi filename.txt. To open and edit an existing file with vi, you’d use vi existing_file.txt.

Q: Explain the modes in the vi (Vim) text editor and how you switch between them.

Answer: Vim has three primary modes: Normal, Insert, and Visual. You switch between modes by pressing ‘Esc’ to go from Insert to Normal mode and ‘i’ to enter Insert mode. In Normal mode, you can execute commands.

Q: What are the advantages of using vim over other text editors, and how would you save and exit a file in Vim?

Answer: Vim is highly configurable, provides powerful text manipulation features, and has a strong community. To save and exit a file in Vim, press ‘Esc’ to enter Normal mode and then type :wq and press ‘Enter’.

Chapter 5: Managing Local Linux Users and Groups¶

Abstract Introduction¶

Chapter 5 explores the fundamental concepts of user and group management in Red Hat Enterprise Linux. Proper user and group management is essential for system security and access control. This chapter covers creating, managing, and configuring user accounts and groups.

Section 1: Users and Groups¶

Info¶

Info |

Details |

|---|---|

Motivation |

Understand the role of users and groups in Linux and why they are essential for system administration. |

Objective |

Learn the concepts of user and group management in Linux. |

Explanation |

In Linux, users and groups play a vital role in system security and access control.Users are individual accounts, while groups are collections of users with similar privileges.Users and groups are essential for controlling file access, permissions, and resource allocation. |

Term |

Description |

|---|---|

Users |

Individual accounts that can log in to the system. |

Groups |

Collections of users with similar privileges. |

Tips¶

Effective user and group management is crucial for ensuring system security and access control.

Regularly review and audit user accounts and group memberships to maintain system security.

Section 2: Gaining Superuser Access¶

Info¶

Info |

Details |

|---|---|

Motivation |

Understand the concept of superuser access and how it differs from regular user privileges. |

Objective |

Learn how to gain superuser access to perform administrative tasks. |

Explanation |

The superuser, often referred to as “root,” has elevated privileges and can perform administrative tasks that regular users cannot. To gain superuser access, you can use the su (substitute user) command or log in directly as the root user. |

Command: su¶

Command |

Description |

|---|---|

su |

Substitute user command for gaining superuser access. |

su -

This command allows you to switch to the root user, provided you know the root password.

Tips¶

Exercise caution when using superuser privileges, as they allow unrestricted access to the system.

Logging in as the root user should be done sparingly and with care.

Section 3: Managing Local User Accounts¶

Info¶

Info |

Details |

|---|---|

Motivation |

Understand how to create and manage user accounts for system users. |

Objective |

Learn how to create, modify, and delete user accounts using command-line tools. |

Explanation |

You can create, modify, and delete user accounts in Linux using commands like useradd, usermod, and userdel. For example, to create a new user: |

Commands: useradd, usermod, userdel, passwd¶

Command |

Description |

|---|---|

useradd |

Create a new user account. |

usermod |

Modify user account properties. |

userdel |

Delete a user account. |

passwd |

Set or change a user’s password. |

# To create new user:

useradd username

# To set a password for the user:

passwd username

Tips¶

Properly configure user account properties, including home directories, default shells, and group memberships.

Regularly audit and manage user accounts to ensure system security.

Steps to add User to a Group?¶

To add a user to a group in Linux, you can use the usermod command. The usermod command allows you to modify the properties of a user account, including the groups that the user belongs to.

To add a user to a group using the usermod command, you must specify the following:

The name of the user to add to the group

The name of the group to add the user to

For example, to add the user bard to the group developers, you would run the following command:

usermod -a -G developers bard

The -G option tells the usermod command to modify the user’s group memberships. The developers argument is the name of the group to add the user to.

The -a option in the usermod command tells the command to append the group to the list of groups that the user belongs to. This means that the user will be added to the group, but they will not be removed from any other groups that they belong to.

You can also use the groupadd command to add a user to a group. The groupadd command allows you to create and modify groups.

To add a user to a group using the groupadd command, you must specify the following:

The name of the group to create or modify

The name of the user to add to the group

For example, to add the user bard to the group developers, you would run the following command:

groupadd -f -m developers

The -f option tells the groupadd command to force the creation of the group, even if it already exists. The -m option tells the groupadd command to create a home directory for the group.

Once you have added the user to the group, you can check to make sure that the user has been added to the group by running the following command:

groups bard

This command will print a list of the groups that the user bard belongs to.

Frequently Asked Questions (FAQ)¶

Interview questions may assess a candidate’s knowledge of user and group management in Linux, a critical skill for system administration. Here are some common interview questions related to this chapter:

Q: Explain the purpose of user accounts and groups in Linux. Why is it essential to manage them effectively?

Answer: User accounts provide individual access to the system, while groups allow for the management of user privileges. Effective management is essential for security, access control, and resource allocation.

Q: What is the difference between a user and a group in Linux, and how do they work together for access control?

Answer: Users are individual accounts, while groups are collections of users. Groups are used to grant permissions to multiple users simultaneously, simplifying access control.

Q: How can you create a new user account in Linux, and what command would you use for this?

Answer: To create a new user account, you can use the useradd command. For example, sudo useradd newuser creates a new user account named “newuser.”

Q: Explain the concept of superuser access in Linux. How do you gain superuser access, and what precautions should be taken when using it?

Answer: The superuser, often referred to as “root,” has elevated privileges. To gain superuser access, you can use the su command. Precautions include using it sparingly, as it provides unrestricted access to the system.

Q: Describe how to set or change a user’s password in Linux. What command is used, and what security considerations should be kept in mind when managing passwords?

Answer: To set or change a user’s password, you can use the passwd command. Security considerations include using strong passwords, ensuring regular password changes, and protecting password hashes.

Chapter 6: Controlling Access to Files with Linux File System Permissions¶

Abstract Introduction¶

Chapter 6 delves into the critical aspect of file system permissions in Red Hat Enterprise Linux. Properly configured file permissions are essential for securing data and resources. This chapter covers the concepts of Linux file permissions and how to control access to files and directories.

Section 1: Linux File System Permissions¶

Info¶

Info |

Details |

|---|---|

Motivation |

Understand the significance of file system permissions in maintaining system security. |

Objective |

Learn how Linux file system permissions work and their role in access control. |

Explanation |

File system permissions in Linux determine who can access, modify, or execute files and directories. Permissions are assigned to three categories: owner, group, and others, and can be set as read (r), write (w), and execute (x). |

Permissions¶

Term |

Description |

|---|---|

Permissions |

Access control settings for files and directories. |

Linux file system permissions are a fundamental aspect of access control and security in the operating system. They dictate who can read, write, and execute files and directories.

Permissions Breakdown: r, w, x¶

Here’s a breakdown of the available permissions and what they mean:

Permission |

Description |

|---|---|

Read (r) |

For files: If a user has read permission, they can view the contents of the file.For directories: With read permission, a user can list the files and subdirectories within the directory. |

Write (w) |

For files: Users with write permission can modify the contents of the file or delete it.For directories: Write permission allows users to create, delete, and modify files and subdirectories within the directory. |

Execute (x) |

For files: Execute permission allows users to run the file as a program or script.For directories: Execute permission is necessary to enter the directory and access its contents. Without execute permission, a user can’t access files or subdirectories within it. |

Permissions Assignation: u, g, o¶

Permissions are assigned to three categories:

Assigned To |

Details |

|---|---|

Owner (u) |

These permissions apply to the user who owns the file or directory. |

Group (g) |

These permissions apply to the group associated with the file or directory. Files and directories can belong to a specific group, and all members of that group have these permissions. |

Others (o) |

These permissions apply to all users who are not the owner or in the group. |

Speical Permission: SUID (s), SGID (g)¶

Additionally, there’s a special permission called the “set user ID” (SUID) and “set group ID” (SGID). These permissions allow a user who runs the file to temporarily gain the privileges of the owner (SUID) or group (SGID) for the duration of the program’s execution.

SUID (Set User ID):

SUID is often used to give users the ability to execute a program with the permissions of the program’s owner, regardless of who runs it. It’s frequently used for programs that need elevated privileges, like passwd which allows users to change their passwords.

Let’s take an example of a program that can only be executed by the owner but needs SUID to run with elevated permissions:

# Create a simple C program (e.g., myprogram.c)

#include <stdio.h>

#include <unistd.h>

int main() {

uid_t uid = geteuid();

printf("Effective User ID: %d\n", uid);

return 0;

}

# Compile the program

gcc myprogram.c -o myprogram

# Set the SUID bit on the executable

# + sign indicates that you are adding a permission.

chmod +s myprogram

# Check the permissions

ls -l myprogram

In this case, when any user executes myprogram, it will run with the effective user ID of the program’s owner, not the user who runs it. You’ll see that the SUID permission is set in the file’s permissions when you run ls -l.

SGID (Set Group ID):

SGID is often used for directories to ensure that files created within the directory inherit the group ownership of the directory rather than the user’s default group.

Let’s create an example where a directory has SGID set:

# Create a directory

mkdir sgid_example

# Set the SGID bit on the directory

chmod g+s sgid_example

# Check the permissions of the directory

ls -ld sgid_example

# Create a file within the directory

touch sgid_example/myfile

# Check the group ownership of the created file

ls -l sgid_example/myfile

With SGID set on the sgid_example directory, when a user creates a file within it, the file inherits the group ownership of the directory. This can be useful for collaborative projects where multiple users need access to shared files.

Notation¶

Symbolic Notation¶

Symbolic notation is a human-readable representation of file permissions using letters and symbols. It consists of three parts: the user, the group, and others, each represented by ‘u,’ ‘g,’ and ‘o’ respectively. The permissions themselves are represented by ‘r’ for read, ‘w’ for write, and ‘x’ for execute. Here’s how it works:

Notation |

Description |

|---|---|

u |

stands for the user/owner |

g |

stands for the group |

o |

stands for others |

In symbolic notation, you can represent these permissions as a combination of letters (e.g., “rw-r–r–” for a file with read and write permissions for the owner and read-only permissions for the group and others).

In this notation:

“rw-” represents read and write permissions for the owner (user).

“r–” represents read-only permissions for the group.

“r–” represents read-only permissions for others.

# Create a sample file

touch sample_file

# Set permissions using symbolic notation

chmod u=rw-,g=r--,o=r-- sample_file

# Check the permissions

ls -l sample_file

Illustration:

When you use ls -l to view the permissions of the “sample_file,” you’ll see the symbolic notation:

-rw-r--r--

This means:

The owner (user) has read and write permissions.

The group has read-only permissions.

Others have read-only permissions.

You can use the same symbolic notation with the chmod command to set or modify permissions as needed. For example:

chmod u+w sample_file adds write permission for the owner.

chmod g-x sample_file removes execute permission for the group.

chmod o+rwx sample_file grants read, write, and execute permissions for others.

Numeric Notation¶

Numeric notation assigns values to each permission:

read (4)

write (2)

execute (1)

The sum of these values determines the overall permission value.

For example, if a file has permissions “rw-r–r–,” the numeric representation for the owner’s permissions (4 + 2) would be 6, and the numeric representation for the group and others (4) would be 4.

# Create a sample file

touch sample_file

# Set permissions using numeric notation

chmod 644 sample_file

# Check the permissions

ls -l sample_file

Why read = 4, write = 2, and excute = 1 ?¶

The values of 4 for read, 2 for write, and 1 for execute in numeric notation are historical conventions, and they are based on a system of binary representation of permissions. Let me explain:

In the binary numbering system, each digit is a power of 2, and the values are as follows:

2^0 = 1

2^1 = 2

2^2 = 4

2^3 = 8

These values are used because they correspond to different bits in a binary representation, making it easy to calculate and manipulate file permission values.

Here’s how it works:

Execute (x): This permission corresponds to the least significant bit (LSB) in a binary representation. Since it’s the smallest power of 2, it’s assigned the value 1.

Write (w): Write permission corresponds to the next bit, which is 2^1 = 2. In binary, it’s represented as “10.”

Read (r): Read permission corresponds to the next bit, which is 2^2 = 4. In binary, it’s represented as “100.”

Using these values, you can easily represent the different combinations of permissions in binary form. For example:

“111” would mean read, write, and execute permissions (4 + 2 + 1).

“101” would mean read and execute permissions (4 + 1), but not write.

Tips¶

Regularly review and adjust file permissions to ensure that access is appropriately restricted.

Use permissions to enforce the principle of least privilege, granting only the necessary access to users and groups.

Section 2: Managing File System Permissions from the Command Line¶

Info¶

Info |

Details |

|---|---|

Motivation |

Learn how to manage file system permissions using command-line tools. |

Objective |

Understand how to set and modify file permissions for files and directories. |

Explanation |

Permissions can be set and modified using commands like chmod for changing permissions, chown for changing ownership, and chgrp for changing group ownership. |

Commands: chmod, chown, chgrp¶

Command |

Description |

|---|---|

chmod |

Change file permissions. |

chown |

Change file ownership. |

chgrp |

Change group ownership. |

For example, to give read and write permissions to the owner of a file:

chmod u+rw filename

Same as explained in the above sections.

Tips¶

Use symbolic or numeric notation with chmod to set permissions.

Carefully consider the implications of changing file ownership and group ownership.

Section 3: Managing Default Permissions and File Access¶

Info¶

Info |

Details |

|---|---|

Motivation |

Understand how to control default permissions for newly created files and directories. |

Objective |

Learn how to manage default permissions and access for new files and directories. |

Explanation |

Default permissions for new files and directories can be controlled using the umask command. The umask value subtracts from the maximum permissions to set default restrictions. For example, to set a default umask of 077: |

Command: umask¶

Command |

Description |

|---|---|

umask |

Set default permissions for newly created files and directories. |

umask

The umask value determines the default permissions to be removed from newly created files and directories. In this case, “0002” restricts write permissions for group and others.

umask Value¶

The umask is represented by a four-digit number, where each digit represents a specific set of permissions:

Digit |

Details |

|---|---|

First Digit |

Represents the permissions for the owner (user). |

Second Digit |

Represents the permissions for the group. |

Third Digit |

Represents the permissions for others (users who are not the owner or in the group). |

Fourth Digit |

Represents special permissions (like the sticky bit), but it’s rarely used with umask. |

The value of each digit in the umask is subtracted from the maximum possible permissions (which is 7 for read, write, and execute) to determine the default permissions for newly created files and directories. Here’s how it works:

Possible Value |

Details |

|---|---|

0 |

No permission will be removed. It leaves the corresponding group with full permissions. |

1 |

Execute permission will be removed. This corresponds to the numeric value for execute (1), so newly created files or directories won’t have execute permission for that group. |

2 |

Write permission will be removed. This corresponds to the numeric value for write (2), so newly created files or directories won’t have write permission for that group. |

3 |

Both write (2) and execute (1) permissions will be removed. This means newly created files or directories won’t have write or execute permissions for that group. |

4 |

Read permission will be removed. This corresponds to the numeric value for read (4), so newly created files or directories won’t have read permission for that group. |

5 |

Read (4) and execute (1) permissions will be removed. This means newly created files or directories won’t have read or execute permissions for that group. |

6 |

Read (4) and write (2) permissions will be removed. This means newly created files or directories won’t have read or write permissions for that group. |

7 |

All permissions (read, write, and execute) will be removed. This leaves the corresponding group with no permissions on newly created files or directories. |

Here’s a breakdown of what each digit in “0002” represents:

Digit |

Description |

|---|---|

First Digit (0) |

This digit corresponds to the owner’s permissions. In “0002,” it’s set to “0,” meaning no permissions are removed from the owner. The owner can retain full read, write, and execute permissions. |

Second Digit (0) |

This digit corresponds to the group’s permissions. In “0002,” it’s set to “0,” meaning no permissions are removed from the group. The group can retain full read, write, and execute permissions. |

Third Digit (0) |

This digit corresponds to permissions for others (users who are not the owner and not in the group). In “0002,” it’s set to “0,” meaning no permissions are removed from others. Others can retain full read, write, and execute permissions. |

Fourth Digit (2) |

This digit corresponds to special permissions like the sticky bit. In “0002,” it sets the “write” permission to be removed from both the group and others. This means that newly created files and directories will not grant write permissions to the group or others. |

Tips¶

Use umask to enhance system security by restricting default permissions.

Adjust the umask value according to your specific security requirements.

Frequently Asked Questions (FAQ)¶

you may encounter a range of questions that assess your knowledge of file permissions and your ability to manage them effectively. Here are some frequently asked interview questions, including one tricky question:

Q: What are the three basic types of permissions associated with files and directories in Linux?

Answer: The three basic types of permissions are read (r), write (w), and execute (x).

Q: Explain the difference between the owner, group, and others permissions.